From Agentic Phones to Vibe Interfaces

Let's skip past the Harness Engineering frenzy for a second. I hate that tens of gigabytes on my laptop are wasted across 3 VS Code forks, 4 CLIs, and 2 heavy desktop apps, probably 60% of it duplicate code. Let's talk about something bigger: how we'll actually use our technology in the near future.

My take? The grid of apps on my phone isn't going to last.

TL;DR

We're already moving from manually using apps to having AI do things for us. The problem is: phones aren't built for that yet.

Mobile computing will likely evolve in four steps:

- Clumsy screenshot-act loop agents.

- Agents that remember and optimize repeatable actions.

- OS-level "intents" (no UI needed) — might be built into the phone's OS.

- Apps turning into invisible capability bundles — basically everything is an MCP.

At the end of that path, the smartphone as we know it fades away. Interfaces shift to voice, glasses, and eventually brain-computer interfaces, where you don't even speak, you just intend. I call it the "Vibe Interface" (VI).

The Mobile Bottleneck

AI agents are already pretty capable on desktops — they can run code, navigate systems, and actually do things for you.

Phones are a different story. iOS and Android are locked down. No root access, no deep system control. So if we want truly autonomous agents on mobile, the whole setup needs to change.

Phase 1: The Visual Scraper (aka the hacky phase)

The workaround is simple and ugly: treat the screen like the only source of truth.

- The agent takes screenshots, runs them through a vision model, figures out what's on screen, and taps where a human would tap.

What it feels like: You ask it to turn off your alarm, and it literally opens the Clock app and presses the button. You can watch it happen.

The problem: It's slow and fragile. No access to APIs (not even the mobile apps' API calls). Change the UI, and everything breaks.
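To make the loop concrete, here's a minimal sketch of the screenshot-act cycle. Everything in it is a stand-in I made up for illustration: `FakePhone`, `toy_vision_model`, and the tap coordinates are hypothetical, and a "screenshot" is just a string label instead of real pixels going through a real vision model.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Tap:
    x: int
    y: int


def run_screen_loop(
    capture: Callable[[], str],
    decide: Callable[[str, str], Optional[Tap]],
    tap: Callable[[Tap], None],
    goal: str,
    max_steps: int = 10,
) -> int:
    """Repeat capture -> decide -> tap until decide() returns None.

    Returns the number of taps performed.
    """
    for taps_done in range(max_steps):
        screen = capture()
        action = decide(screen, goal)
        if action is None:  # the model thinks the goal is reached
            return taps_done
        tap(action)
    raise RuntimeError("goal not reached within max_steps")


class FakePhone:
    """Toy device: home screen -> Clock app -> alarm switched off."""

    def __init__(self) -> None:
        self.screen = "home"
        self.taps: List[Tap] = []

    def capture(self) -> str:
        return self.screen

    def tap(self, t: Tap) -> None:
        self.taps.append(t)
        self.screen = {"home": "clock", "clock": "alarm_off"}[self.screen]


def toy_vision_model(screen: str, goal: str) -> Optional[Tap]:
    # Hard-coded coordinates per screen (an assumption for this toy).
    # Move a button and this breaks, which is the fragility described above.
    targets = {"home": Tap(40, 300), "clock": Tap(200, 120)}
    return targets.get(screen)


phone = FakePhone()
taps = run_screen_loop(phone.capture, toy_vision_model, phone.tap, "turn off my alarm")
print(taps, phone.screen)
```

Note that every step needs a fresh capture and a fresh model call, which is where the slowness comes from.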

Phase 2: Memory + Delegation

- A planning agent breaks down tasks

- A memory system records what worked

- Next time, it replays the steps instantly

What it feels like: On Monday, it figures out how to order your usual coffee. On Tuesday, it just does it: fast, in the background. Current-gen automation tools, e.g. Tasker on Android or Shortcuts on iOS, could enable this.

Multi-step tasks across apps stop being annoying.
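Here's a rough sketch of the plan-once, replay-later idea, under my own assumptions. `ReplayingAgent`, the step names, and the "drop the recipe when a step fails" rule are all hypothetical, not how Tasker or any shipping agent works.

```python
from typing import Callable, Dict, List


class ReplayingAgent:
    """Plans a task the first time, then replays the recorded steps."""

    def __init__(
        self,
        plan: Callable[[str], List[str]],
        execute: Callable[[str], bool],
    ) -> None:
        self._plan = plan          # slow path: the planning agent
        self._execute = execute    # performs one step, True on success
        self._recipes: Dict[str, List[str]] = {}
        self.plan_calls = 0

    def run(self, task: str) -> str:
        steps = self._recipes.get(task)
        source = "replayed"
        if steps is None:
            self.plan_calls += 1
            steps = self._plan(task)
            source = "planned"
        for step in steps:
            if not self._execute(step):
                # The recipe no longer works (e.g. the UI changed),
                # so forget it and re-plan next time.
                self._recipes.pop(task, None)
                return "failed"
        self._recipes[task] = steps  # record what worked
        return source


def toy_planner(task: str) -> List[str]:
    return ["open_coffee_app", "select_usual_order", "pay"]


agent = ReplayingAgent(plan=toy_planner, execute=lambda step: True)
monday = agent.run("order my usual coffee")
tuesday = agent.run("order my usual coffee")
print(monday, tuesday, agent.plan_calls)
```

The expensive planner only runs on Monday. Tuesday goes straight to the stored steps.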

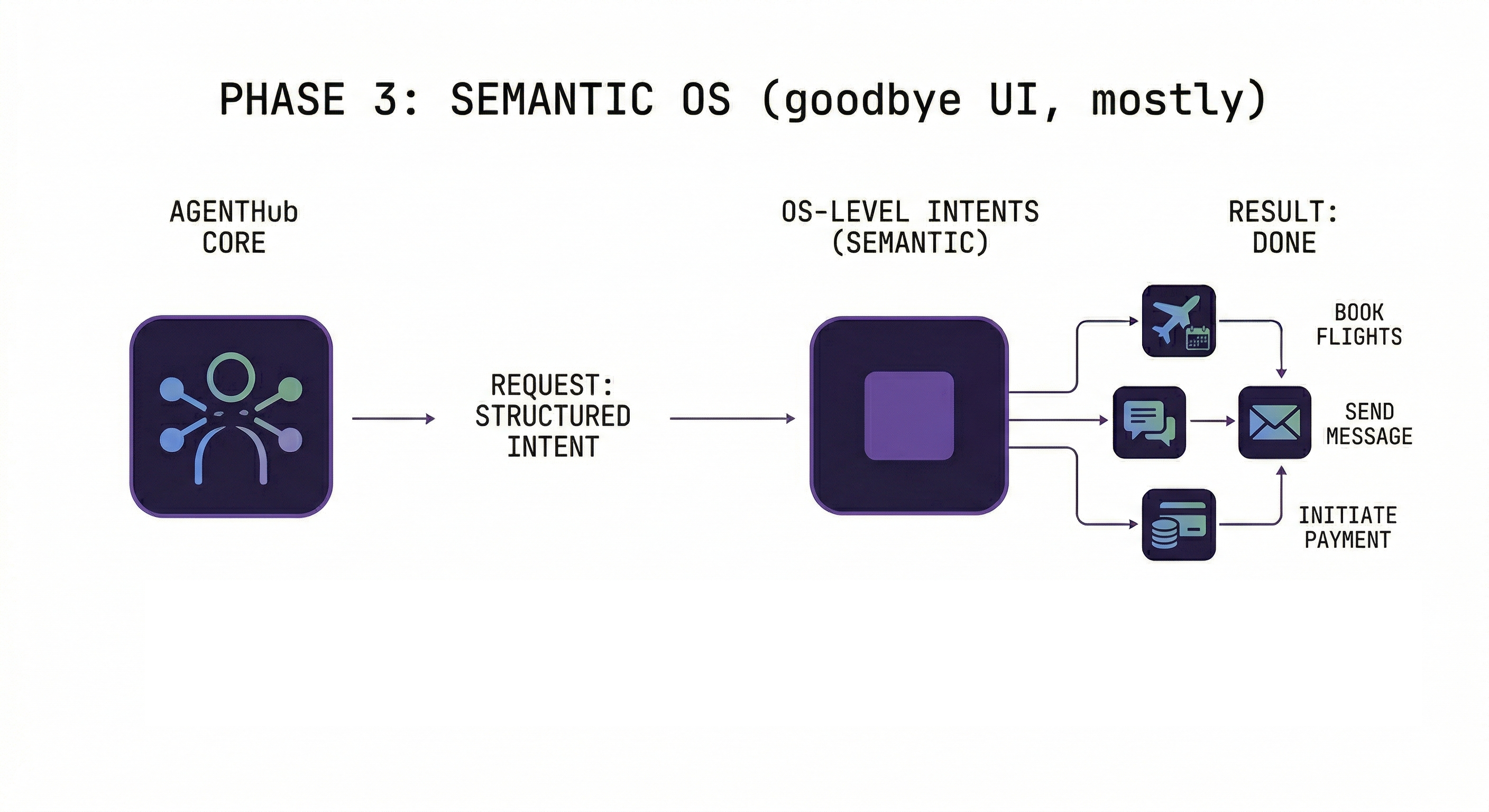

Phase 3: The Semantic OS (goodbye UI, mostly)

Instead of pretending to be a human tapping buttons, the agent talks directly to the OS.

- Apps expose "intents" (basically APIs)

- The agent sends structured requests — no UI needed

What it feels like: "Order my usual." Done. No app opens. No loading screen. Just happens.

I believe this is where Google is headed with Android (AICore Services & Gemini) and Apple with extending its App Intents, with early glimpses already in the Shortcuts app.
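As a sketch of what "apps expose intents, the agent sends structured requests" could look like, here's a tiny in-process registry. To be clear, this is not Android's Intent API or Apple's App Intents. `IntentRegistry`, `IntentRequest`, and the `coffee.order_usual` name are all invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Iterable, Tuple


@dataclass(frozen=True)
class IntentRequest:
    name: str
    params: Dict[str, Any] = field(default_factory=dict)


class IntentRegistry:
    """Maps intent names to app-provided handlers. No UI involved."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Tuple[frozenset, Callable[..., Any]]] = {}

    def register(
        self, name: str, required: Iterable[str], handler: Callable[..., Any]
    ) -> None:
        self._handlers[name] = (frozenset(required), handler)

    def dispatch(self, request: IntentRequest) -> Any:
        if request.name not in self._handlers:
            raise LookupError(f"no app handles intent {request.name!r}")
        required, handler = self._handlers[request.name]
        missing = required - set(request.params)
        if missing:
            raise ValueError(f"missing parameters: {sorted(missing)}")
        return handler(**request.params)


def order_usual(customer_id: str) -> Dict[str, str]:
    # A real app would call its own backend here.
    return {"status": "ordered", "customer_id": customer_id}


registry = IntentRegistry()
registry.register("coffee.order_usual", ["customer_id"], order_usual)
result = registry.dispatch(
    IntentRequest("coffee.order_usual", {"customer_id": "c-42"})
)
print(result)
```

Compared to the screenshot loop, there are no coordinates and no vision model. The request either matches a declared intent or fails loudly.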

Phase 4: The Death of the App Icon

At this point, the idea of an "app" starts to break down. You're not installing interfaces anymore — you're installing capabilities.

- Each app becomes an Agent Manifest

- Your device runs an "Agent Command Center" in place of the mobile OS

- It coordinates everything behind the scenes and only surfaces something when necessary — confirmations, progress, completion

What it feels like: "Hey J.A.R.V.I.S. Plan me a weekend trip."

Your agent quietly pulls in flights, hotels, and restaurants — then gives you a finished plan. No app-switching, no tabs, no chaos.
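One way to picture an Agent Manifest and a command center, purely as a thought experiment: the manifest lists capabilities, and the coordinator only surfaces something when a capability is flagged as needing confirmation. The class names, capability names, and the `needs_confirmation` flag are my own assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Capability:
    name: str
    run: Callable[[dict], dict]
    needs_confirmation: bool = False


@dataclass
class AgentManifest:
    app_id: str
    capabilities: List[Capability]


class CommandCenter:
    def __init__(self, confirm: Callable[[str], bool]) -> None:
        self._confirm = confirm                  # asks the user, returns yes/no
        self._index: Dict[str, Capability] = {}
        self.surfaced: List[str] = []            # the only things the user sees

    def install(self, manifest: AgentManifest) -> None:
        for cap in manifest.capabilities:
            self._index[cap.name] = cap

    def perform(self, plan: List[str], context: dict) -> Dict[str, dict]:
        results: Dict[str, dict] = {}
        for name in plan:
            cap = self._index[name]
            if cap.needs_confirmation:
                self.surfaced.append(f"confirm: {name}")
                if not self._confirm(name):
                    self.surfaced.append(f"skipped: {name}")
                    continue
            results[name] = cap.run(context)
        self.surfaced.append("done")
        return results


center = CommandCenter(confirm=lambda name: False)  # user declines everything
center.install(AgentManifest("flights-app", [
    Capability("flights.search", lambda ctx: {"offers": 3}),
    Capability("flights.book", lambda ctx: {"booked": True}, needs_confirmation=True),
]))
center.install(AgentManifest("hotels-app", [
    Capability("hotels.search", lambda ctx: {"offers": 5}),
]))
results = center.perform(
    ["flights.search", "hotels.search", "flights.book"], {"city": "Paris"}
)
print(sorted(results), center.surfaced)
```

The searches run silently. Only the booking step interrupts you.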

In fact, Andrej Karpathy (I keep bringing him up) touched on that future briefly on the "No Priors" podcast: what's the point of conventional apps when an agent can act on your behalf?

Ditching the fingers

We've spent over a decade training ourselves to touch software. Swipe. Tap. Pinch. Pull to refresh. These aren't just gestures — they're muscle memory, and they give us something subtle but powerful: feedback.

You tap → something moves. You scroll → content flows. That constant visual response is comforting. It makes the system feel alive and under your control.

Now imagine removing all of that. Just: you ask, and then it's… done.

Our Attachment to the Smartphone

People don't just use smartphones. We identify with them — pick a side (Apple vs Samsung, I'm a Pixel fan), stick with an ecosystem for years, make the phone our wallet, camera, memory, and social life.

And then there's the anxiety. That moment you leave the house without your phone? The last time it happened to me, after coming to terms with it, I had one of my best days just experiencing everything else. But coming back home, I searched for it everywhere — first thing. The device isn't just a tool. It's a proxy for your entire digital existence.

The move to agent-driven systems minimizes interaction itself. Less manual control, fewer feedback loops, less of that satisfying "I did this" feeling. For some, that'll feel like freedom. For others, like giving something up.

We didn't just learn to use smartphones — we built habits, instincts, and identity around them. Ditching that won't happen overnight.

The Rise of "Vibe Apps"

If apps stop being interfaces and start being capabilities, a whole new marketplace shows up — a Vibe App Store.

Instead of downloading apps you open, you install Vibe Apps your agent can (re-)use: pre-built automations, agent instructions, connections to service backends. You don't interact with them directly. Your agent does. (Note: "Vibe App" is just another facade for the same thing: tool use, skill, MCP server, extension, plugin, the naming goes on...)

What it feels like:

Today: You open Skyscanner, set filters, scroll results, compare flights.

Tomorrow: "Find me flights for 2 people, Athens to Paris, next month, under €300." Your agent calls the Skyscanner Vibe App, passes your preferences, gets results, and books — if you approve.
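Here's how that sentence might turn into a structured call, as a sketch only. `FlightQuery`, `search_and_maybe_book`, and the offer format are hypothetical and have nothing to do with Skyscanner's real API. I'm also assuming the €300 cap applies to each offer's total price, which the spoken request leaves ambiguous.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, List, Optional


@dataclass(frozen=True)
class FlightQuery:
    origin: str
    destination: str
    passengers: int
    month: str            # "YYYY-MM"
    max_price_eur: float  # assumed to cap the total price of one offer


def next_month(today: date) -> str:
    """Resolve the spoken 'next month' into a concrete YYYY-MM string."""
    if today.month == 12:
        return f"{today.year + 1:04d}-01"
    return f"{today.year:04d}-{today.month + 1:02d}"


def search_and_maybe_book(
    query: FlightQuery,
    search: Callable[[FlightQuery], List[dict]],
    book: Callable[[dict], str],
    approve: Callable[[dict], bool],
) -> Optional[str]:
    offers = [o for o in search(query) if o["price_eur"] <= query.max_price_eur]
    if not offers:
        return None
    cheapest = min(offers, key=lambda o: o["price_eur"])
    # Booking only happens if the user approves the chosen offer.
    return book(cheapest) if approve(cheapest) else None


query = FlightQuery("ATH", "PAR", 2, next_month(date(2025, 3, 10)), 300.0)
fake_offers = [{"id": "A", "price_eur": 340.0}, {"id": "B", "price_eur": 280.0}]
booking = search_and_maybe_book(
    query,
    search=lambda q: fake_offers,
    book=lambda offer: f"booked {offer['id']}",
    approve=lambda offer: True,
)
print(query.month, booking)
```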

Just like today's app ecosystem, you'd also get community-made content: power users sharing workflows, niche automations, personal productivity packs. Think Zapier + App Store + GitHub, but everything is intent-driven.

Safety Risk: If Someone Gets Your Unlocked Phone

Today, if someone grabs your unlocked phone, they can read messages and access some apps — damage is real but somewhat manual.

In an agent-driven world, your phone isn't just a gateway — it's an executor.

A capable manager agent with access to your tools can:

- Send messages to all your contacts instantly

- Move money, book travel, access services across apps

- Chain actions together — all through natural language

An attacker doesn't need technical skill. They just need to ask.

Two big differences from today:

- Speed — what used to take minutes now happens in seconds

- Abstraction — the attacker doesn't need to understand your apps; the agent does it for them

You're not protecting a device anymore. You're protecting something that can act as you.

What needs to change: Continuous authentication, scoped permissions, action confirmation layers, behavioral anomaly detection, remote kill switches, and time-limited delegation tokens.
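Three of those ideas (scoped permissions, action confirmation, time-limited delegation tokens) fit in a few lines. This is a toy policy check, not a security design: the scope names, the `HIGH_RISK` set, and the `authorize` function are assumptions of mine.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class DelegationToken:
    scopes: FrozenSet[str]  # actions the agent may take on your behalf
    expires_at: float       # Unix timestamp after which the token is dead


# Hypothetical list of actions that always need a fresh user confirmation.
HIGH_RISK = frozenset({"payments.send", "messages.broadcast"})


def authorize(
    token: DelegationToken, action: str, now: float, confirmed: bool = False
) -> str:
    if now >= token.expires_at:
        return "denied: token expired"
    if action not in token.scopes:
        return "denied: out of scope"
    if action in HIGH_RISK and not confirmed:
        return "needs confirmation"
    return "allowed"


now = time.time()
token = DelegationToken(
    scopes=frozenset({"calendar.read", "payments.send"}),
    expires_at=now + 15 * 60,  # valid for 15 minutes
)
print(authorize(token, "calendar.read", now))
print(authorize(token, "payments.send", now))
print(authorize(token, "messages.broadcast", now))
print(authorize(token, "calendar.read", now + 3600))
```

Someone holding your unlocked phone could still ask for things, but money only moves after a confirmation step, and the token goes stale on its own.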

The more useful agents become, the more dangerous they are in the wrong hands. Convenience only wins if safety keeps up.

Beyond the Glass Rectangle

Once we get here, the smartphone (or, at least, its touchscreen) starts to feel unnecessary. If everything runs through an agent, why are we still staring at a slab of glass?

1. Ambient Audio: Your earbuds become your main interface. You speak to it. It responds. No screens needed most of the time.

2. AR Smart Glasses/Heads-Up Displays: Information shows up in your world: task confirmations, directions overlaid on streets, a name tag over someone you've met before. Visual feedback will probably start out frequent, since we're comfortable with that from the phone era, but slowly decrease, with configurable levels: Show Everything / Only When Necessary / DND. There are already hints in this direction, including VisionClaw on Meta's Ray-Ban glasses.

3. BCI ("Vibe Intents"): Brain-computer interfaces let you skip speaking entirely. You don't say what you want. You don't type. You just intend it, and the system acts.

Note: In all 3 scenarios above, the smartphone doesn't necessarily have to go. Maybe the screen does, but the smartphone could act as the "pocket desktop computer", hosting your local agent with its skills and memories, and the interfaces just act as I/O.
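The configurable feedback levels mentioned for the glasses could be as simple as a filter on agent events. A small sketch, where the event kinds and the choice of what counts as "necessary" are my own guesses:

```python
from enum import Enum


class FeedbackLevel(Enum):
    SHOW_EVERYTHING = "show_everything"
    ONLY_WHEN_NECESSARY = "only_when_necessary"
    DND = "dnd"


# Assumption: only confirmations and errors count as "necessary".
NECESSARY_EVENTS = {"confirmation", "error"}


def should_display(level: FeedbackLevel, event_kind: str) -> bool:
    if level is FeedbackLevel.DND:
        return False  # nothing is shown, not even confirmations
    if level is FeedbackLevel.ONLY_WHEN_NECESSARY:
        return event_kind in NECESSARY_EVENTS
    return True


for level in FeedbackLevel:
    shown = [k for k in ("progress", "confirmation", "error") if should_display(level, k)]
    print(level.name, shown)
```

Whether DND should really swallow confirmations is a design question. A stricter variant might queue them instead of dropping them.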

Where We're Headed

We're moving from using computers to working with them. The shift from buttons → APIs → intent-based systems leads to something simpler:

A world where your digital environment just… responds, in English (or your native language).

That's what Vibe Interfaces will really be.

If all this comes true, just know I didn't bet money on it, and that a bunch of tech bros from Silicon Valley probably read a half-AI-generated article on explodin' gradients and decided this is what our future should look like.